Discover Mercor AI interview insights: process breakdown, common questions, tips, and real candidate experiences.

The Mercor AI interview is becoming a common first gate in hiring: candidates describe short, AI-driven sessions that scan your CV and ask adaptive follow-ups, often on a tight clock. This post explains what a typical Mercor AI interview experience looks like, why it matters, and how to prepare, with templates, troubleshooting tips, and a practice regimen you can use today [Jointaro; Mozibox; StartupStash].

How does the Mercor AI interview experience differ from a human interview

Mercor AI interview experience is usually shorter and more text-driven than a human-led call. Candidates report single sessions of ~20 minutes focused on domain questions, case examples, and explicit evaluation criteria. The AI adapts follow-ups from CV text and prior answers, meaning it favors parseable signals: keywords, clear role titles, and numeric outcomes. Unlike humans, an AI may cut answers short or pivot quickly to unexpected resume items because it reads text literally rather than inferring context [Jointaro; StartupStash].

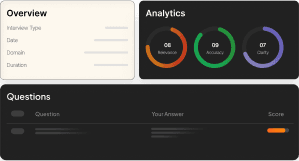

What should you expect during a Mercor AI interview experience

Expect a timed, adaptive interview: a short intro, 8–15 focused prompts, and follow-ups guided by your CV. For technical or medical roles the AI often asks:

- Domain expertise checks

- One recent case/project with methods and metrics

- LLM/application design or evaluation criteria for AI roles

Sessions frequently request explicit metrics or rubrics; prepare to state success thresholds, latency numbers, or clinical adoption rates when relevant [Mozibox; StartupStash].

How should you revise your resume for a Mercor AI interview experience

Make your resume AI-friendly:

- Front-load role titles and domain keywords in the first line of each entry.

- Add 1–2 bullet lines with your biggest domain achievement and a clear metric (e.g., “reduced inference latency by 40% (C++ refactor)”).

- Avoid brief unrelated experiences that could derail the AI’s focus; either contextualize them or remove them so the AI won’t latch onto noisy text [Jointaro; StartupStash].

How should you frame answers for a Mercor AI interview experience

Switch from STAR for humans to CLAIM → EVIDENCE → METRIC for AI:

- CLAIM: One-sentence summary (“Led X to achieve Y”).

- EVIDENCE: One concise technique or decision (the how).

- METRIC: A numeric outcome or evaluation rubric (the result).

This structure keeps answers parseable, minimizes cut-offs, and supplies the explicit signals AI systems seek, especially for LLM and product questions [Mozibox].

What sample questions and model answers fit a Mercor AI interview experience

Sample prompts and short models:

- “Describe a recent latency reduction you led.” MODEL: Claim: “I cut model latency 40%.” Evidence: “Refactored C++ serving + batching.” Metric: “40% lower median latency; 99th percentile < 250 ms.”

- “How would you evaluate an LLM for clinical triage?” MODEL: Claim: “Use accuracy + safety metrics.” Evidence: “Validate on held-out dataset; adversarial safety tests.” Metric: “Target 90% F1, <1% harmful suggestions, clinician adoption >30% in the pilot.”

Use the CLAIM → EVIDENCE → METRIC template for each answer.
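If you draft answers in a file before rehearsing, the template can be kept honest with a few lines of Python. This is only a sketch: the `Answer` class and its "contains a digit" check are illustrative assumptions, not anything Mercor publishes, and a digit is a rough proxy for a real metric.

```python
import re
from dataclasses import dataclass


@dataclass
class Answer:
    """One practice answer in CLAIM -> EVIDENCE -> METRIC form (hypothetical helper)."""
    claim: str
    evidence: str
    metric: str

    def has_numeric_metric(self) -> bool:
        # Heuristic assumption: a usable metric contains at least one digit.
        return bool(re.search(r"\d", self.metric))

    def render(self) -> str:
        # Claim comes first so a cut-off still captures the headline.
        return f"Claim: {self.claim} Evidence: {self.evidence} Metric: {self.metric}"


# The latency example from the sample prompts above.
latency = Answer(
    claim="I cut model latency 40%.",
    evidence="Refactored C++ serving + batching.",
    metric="40% lower median latency; 99th percentile < 250 ms.",
)
# A deliberately vague draft for contrast.
vague = Answer(
    claim="I improved the model.",
    evidence="Tuned it.",
    metric="It got much faster.",
)

for draft in (latency, vague):
    flag = "ok" if draft.has_numeric_metric() else "needs a number"
    print(f"[{flag}] {draft.render()}")
```

Any draft flagged as needing a number is a candidate for rewriting before you rehearse it aloud.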

How can you practice for a Mercor AI interview experience

Simulate the interview with these steps:

1. Paste your CV into an LLM and ask it to generate 15 role-specific follow-ups and 5 cross-domain probes. Time yourself answering in 20-minute blocks.

2. Record brief, 60–90 second CLAIM→EVIDENCE→METRIC responses and replay to self-assess clarity and concision.

3. Track improvement metrics: percent of answers with explicit metrics, average time-to-coherent answer (<90s goal), and transcript clarity scores.

Practical tips are available in candidate write-ups and prep guides [StartupStash].
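Step 1 is easier to repeat if the prompt is generated rather than retyped. The sketch below only builds the text to paste into whichever LLM you use; it calls no API, and the function name, wording, and sample CV line are illustrative assumptions.

```python
def build_practice_prompt(cv_text: str, role: str,
                          n_role: int = 15, n_cross: int = 5) -> str:
    """Return a prompt asking an LLM for mock-interview questions (hypothetical helper)."""
    return (
        f"You are simulating an adaptive AI interviewer for a {role} role.\n"
        f"Using only the CV below, write {n_role} role-specific follow-up "
        f"questions and {n_cross} cross-domain probes.\n"
        "Number the questions and ask for a metric where one is plausible.\n\n"
        f"CV:\n{cv_text.strip()}"
    )


SESSION_SECONDS = 20 * 60  # one 20-minute practice block

# Placeholder CV text; replace with your own.
prompt = build_practice_prompt(
    "ML engineer, 5 yrs; reduced inference latency by 40% (C++ refactor).",
    "ML engineer",
)
# Average time available per question if all 20 are attempted in one block.
per_question = SESSION_SECONDS / (15 + 5)

print(prompt)
print(f"Budget per question: {per_question:.0f}s")
```

With 20 questions in a 20-minute block the budget works out to 60 seconds each, the low end of the 60–90 second range in step 2, so expect to skip a few prompts or trim answers.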

What common problems occur in a Mercor AI interview experience and how can you fix them

Common issues

- AI focuses on unexpected CV items: sanitize or contextualize brief entries.

- Abrupt cut-offs: lead with claim and metric, then offer to expand.

- Vague evaluation criteria: always state the success metric you’d use.

- Keyword stuffing: pair each keyword with a quantified result to avoid shallow signals [Jointaro; Mozibox].

Short corrective phrases if the AI veers off-topic:

- “To confirm, are you asking about my web project experience or my recent ML work?”

- “Brief answer first: X. I can expand if you want the technical steps.”

How should you follow up after a Mercor AI interview experience

After the session:

- Ask the recruiter for a transcript or a single clarifying follow-up.

- Send a concise follow-up email restating one strong example you didn’t finish: one sentence claim, one evidence bullet, one metric.

- Where the process allows it, request a human review if the AI’s feedback seems off. Processes vary, and human context can correct surface-level misreads [Jointaro].

How can you measure improvement for a Mercor AI interview experience

Track:

- Time-to-coherent answer (goal <90s).

- Percent of answers that include an explicit metric.

- Mock-interview pass rate (advance to human round).

- Recruiter feedback and eventual hiring outcomes as the ultimate signal.
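The first two items can be computed from a simple practice log. The sketch below assumes you record each answer's transcript and the seconds it took to reach a coherent answer; the log entries are made up for illustration, and "contains a digit" is only a rough stand-in for "includes an explicit metric".

```python
import re
from statistics import mean

# Hypothetical practice log: (answer transcript, seconds to a coherent answer).
practice_log = [
    ("I cut model latency 40% by refactoring C++ serving.", 75),
    ("I improved the onboarding flow a lot.", 110),
    ("Target 90% F1 and <1% harmful suggestions.", 60),
    ("We shipped the feature on time.", 95),
]


def has_metric(text: str) -> bool:
    # Heuristic assumption: any digit counts as an explicit metric.
    return bool(re.search(r"\d", text))


def summarize(log, time_goal=90):
    """Summarize a practice log against the <90s time-to-coherent-answer goal."""
    times = [seconds for _, seconds in log]
    return {
        "pct_with_metric": 100 * sum(has_metric(text) for text, _ in log) / len(log),
        "avg_seconds": mean(times),
        "pct_under_goal": 100 * sum(s < time_goal for s in times) / len(log),
    }


summary = summarize(practice_log)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Rerun it after each practice block and compare the numbers week to week; the trend matters more than any single session.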

How Can Verve AI Copilot Help You With a Mercor AI Interview Experience

Verve AI Interview Copilot can simulate Mercor-style sessions, giving you targeted practice and feedback. Verve AI Interview Copilot creates adaptive question sets from your CV, lets you time answers, and rates CLAIM→EVIDENCE→METRIC usage. Use Verve AI Interview Copilot to rehearse 20-minute blocks, receive coaching cues, and iterate on your answers. Learn more and try guided simulations at https://vervecopilot.com

What quick checklist and cheat sheet should I use for a Mercor AI interview experience

Pre-interview resume checklist

- Role title front-loaded and domain keywords present.

- One clear, metric-driven bullet per role.

- Remove or contextualize short unrelated entries.

3-minute case structure (copyable; the segments below total 2.5 minutes, which leaves about 30s of slack)

- 30s intro: “I’m a [role, years] specializing in [domain]; recent project X achieved Y.”

- 90s core: CLAIM → EVIDENCE → METRIC.

- 30s wrap: “Key lesson and evaluation rubric.”

Follow-up email template

- Subject: Quick follow-up on our session

- Body: One-sentence example I couldn’t finish, 1 evidence line, metric, offer to discuss details.

Limitations and fairness note

Candidate anecdotes vary and processes evolve; Mercor’s implementation may differ by role and region. AI systems can overweight textual signals, so sanitize your surface signals and ask for human review when needed [Jointaro; Mozibox].

Sources

- Candidate technical account and CV-scan anecdote: Jointaro

- Medical role experience emphasizing metrics and rubrics: Mozibox

- Practical prep and simulation advice: StartupStash


Kevin Durand

Career Strategist